Will Google TurboQuant Lower RAM Prices?

Google TurboQuant is a revolutionary dynamic quantization technology released in early 2026 that shrinks Large Language Models (LLMs) from 16-bit to as low as 2-bit or 1.5-bit precision with negligible intelligence loss.

By shrinking a 70B parameter model's weights from roughly 140GB at FP16 to roughly 13 to 18GB at 1.5 to 2 bits per parameter, TurboQuant directly challenges the pricing power held by memory manufacturers. While it significantly lowers the entry barrier for Local AI, the resulting surge in AI adoption may offset the drop in per-model memory demand, keeping DDR5 and HBM prices volatile throughout 2026.
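The memory savings described here follow from simple arithmetic: weight storage equals parameter count times bits per parameter, divided by 8 to get bytes. The sketch below is illustrative only; it assumes decimal gigabytes (1 GB = 10^9 bytes) and counts model weights alone, so the KV cache, activations, and quantization scale factors would add more on top. The function name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate storage for model weights only, in decimal gigabytes.

    Excludes KV cache, activations, and per-group quantization metadata.
    """
    return num_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    params = 70e9  # a 70B parameter model
    for label, bits in [("FP16", 16), ("4-bit", 4), ("2-bit", 2), ("1.5-bit", 1.5)]:
        print(f"{label:>8}: {weight_memory_gb(params, bits):6.1f} GB")
```

For a 70B model this works out to about 140GB at FP16, 17.5GB at 2 bits, and about 13.1GB at 1.5 bits, which is why real-world figures depend heavily on the exact bit width and on what runtime overhead is counted.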

What is Google TurboQuant? The "Compression Magic" Behind Local AI

In the 2026 hardware landscape, "Quantization" is no longer just a buzzword—it is a necessity. TurboQuant acts like high-fidelity video compression for AI weights:

Extreme Precision Reduction

Traditionally, AI models use FP16 (16 bits per parameter). TurboQuant exploits redundancy in neural network weights to compress each parameter to 2 bits, reducing the weight memory footprint by 8x (16 bits down to 2).
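To make the 16-bit to 2-bit step concrete, here is a minimal sketch of generic group-wise 2-bit quantization: each weight is snapped to one of 4 evenly spaced levels between the group's minimum and maximum, and four 2-bit codes are packed into each byte. This is a textbook min-max scheme chosen for illustration, not TurboQuant's actual algorithm, which this sketch does not attempt to reproduce; all function names are hypothetical.

```python
from typing import List, Tuple


def quantize_2bit(weights: List[float]) -> Tuple[List[int], float, float]:
    """Min-max (asymmetric) quantization of one group of weights to 2-bit codes 0..3."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / 3 if hi > lo else 1.0  # 4 levels -> 3 steps between min and max
    codes = [min(3, max(0, round((w - lo) / scale))) for w in weights]
    return codes, scale, lo


def pack_2bit(codes: List[int]) -> bytes:
    """Store four 2-bit codes per byte, lowest bits first."""
    packed = bytearray()
    for start in range(0, len(codes), 4):
        byte = 0
        for slot, code in enumerate(codes[start:start + 4]):
            byte |= (code & 0b11) << (2 * slot)
        packed.append(byte)
    return bytes(packed)


def dequantize_2bit(codes: List[int], scale: float, zero: float) -> List[float]:
    """Map 2-bit codes back to approximate weight values."""
    return [zero + c * scale for c in codes]


if __name__ == "__main__":
    # Hypothetical group of eight weights.
    weights = [0.12, -0.50, 0.33, 0.90, -0.10, 0.05, -0.75, 0.41]
    codes, scale, zero = quantize_2bit(weights)
    packed = pack_2bit(codes)
    fp16_bytes = len(weights) * 2  # 16 bits = 2 bytes per weight
    print(f"FP16: {fp16_bytes} bytes, 2-bit packed: {len(packed)} bytes")
    print("reconstructed:", [round(v, 2) for v in dequantize_2bit(codes, scale, zero)])
```

The eight weights drop from 16 bytes to 2 bytes, the 8x reduction described above. In practice each group also stores its scale and zero point, so real 2-bit formats average slightly more than 2 bits per weight.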

Dynamic Weight Compensation

Unlike static quantization, which can make AI "dumber," TurboQuant analyzes context in real time, preserving high precision for critical keywords while aggressively compressing filler data.
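The idea of spending precision where it matters most can be sketched with a simple mixed-precision scheme: keep the most important values at full precision and squeeze everything else into 2 bits. This is a static illustration under loud assumptions; it uses weight magnitude as a stand-in for "importance" (a common outlier-aware heuristic, not TurboQuant's real-time context analysis), and the function name `mixed_precision_quantize` is hypothetical.

```python
from typing import List, Tuple


def mixed_precision_quantize(
    weights: List[float], keep_fraction: float = 0.1
) -> Tuple[List[float], float]:
    """Keep the largest-magnitude weights at full precision and 2-bit quantize the rest.

    Returns (reconstructed_weights, average_bits_per_weight). Kept weights count
    as 16 bits, the rest as 2 bits; index and scale overhead is ignored.
    """
    n = len(weights)
    if n == 0:
        return [], 0.0
    k = min(n, max(1, int(n * keep_fraction)))
    # Magnitude stands in for "importance" here (an assumption for illustration).
    ranked = sorted(range(n), key=lambda i: abs(weights[i]), reverse=True)
    keep = set(ranked[:k])

    # Min-max 2-bit grid (4 levels) fitted only to the weights being compressed.
    rest = [weights[i] for i in range(n) if i not in keep]
    lo, scale = 0.0, 1.0
    if rest:
        lo, hi = min(rest), max(rest)
        scale = (hi - lo) / 3 if hi > lo else 1.0

    out = []
    for i, w in enumerate(weights):
        if i in keep:
            out.append(w)  # preserved at full precision
        else:
            code = min(3, max(0, round((w - lo) / scale)))
            out.append(lo + code * scale)
    avg_bits = (k * 16 + (n - k) * 2) / n
    return out, avg_bits


if __name__ == "__main__":
    # Hypothetical weights: mostly small values plus one large outlier.
    w = [0.02, -0.03, 0.01, 2.5, -0.04, 0.03, -0.01, 0.00, 0.02, -0.02]
    approx, bits = mixed_precision_quantize(w, keep_fraction=0.1)
    err = max(abs(a - b) for a, b in zip(w, approx))
    print(f"average bits/weight: {bits:.1f}, max error: {err:.4f}")
```

Protecting the single outlier lets the 2-bit grid fit tightly around the small values, at the cost of a higher average bit width (3.4 bits here instead of 2).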

Hardware Liberation

This tech allows mid-range RTX 50-series GPUs or standard 32GB DDR5 kits to run models that previously required enterprise-grade H100 hardware.

Market Impact: Will TurboQuant Actually Drop RAM Prices?

The 2026 Memory Crisis is driven by the gap between AI demand and manufacturing capacity. TurboQuant introduces a "Software Alternative" to buying more hardware:

The Bear Case for Prices: Demand Destruction

If enterprises can run their proprietary AI on 32GB CUDIMM kits instead of 128GB servers, the massive procurement orders from AI giants (the primary driver of 2026 price hikes) could plummet. This could lead to a surplus of DDR5 and NAND Flash, forcing prices down for the average consumer.

The Bull Case for Prices: The Jevons Paradox

Economic history shows that when a resource becomes more efficient to use, we often use more of it. TurboQuant could make AI accessible enough to draw millions of new users into the "Local AI" space, potentially increasing total DRAM demand and sustaining high price points.
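Whether the bear or bull case wins comes down to one ratio: total memory demand is roughly the number of users times the memory each one needs. If compression divides per-user memory by some factor while adoption multiplies the user count by another, total demand rises only when adoption grows faster than efficiency. The sketch below uses hypothetical growth figures, not market data, and the function name `demand_ratio` is illustrative.

```python
def demand_ratio(efficiency_gain: float, adoption_growth: float) -> float:
    """New total memory demand divided by old total demand.

    Total demand = (number of users) x (memory per user). Compression divides
    memory per user by `efficiency_gain`; wider adoption multiplies the number
    of users by `adoption_growth`.
    """
    return adoption_growth / efficiency_gain


if __name__ == "__main__":
    # Hypothetical scenarios with an 8x cut in per-model memory (16-bit -> 2-bit).
    for growth in (4.0, 8.0, 16.0):
        ratio = demand_ratio(8.0, growth)
        trend = "falls" if ratio < 1 else "rises" if ratio > 1 else "is unchanged"
        print(f"{growth:>4.0f}x more users -> total demand x{ratio:.2f} ({trend})")
```

With an 8x efficiency gain, 4x adoption halves total demand (the bear case), while 16x adoption doubles it (the Jevons Paradox).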

The Hidden Cost: AI Compression Requires Extreme Thermal Stability

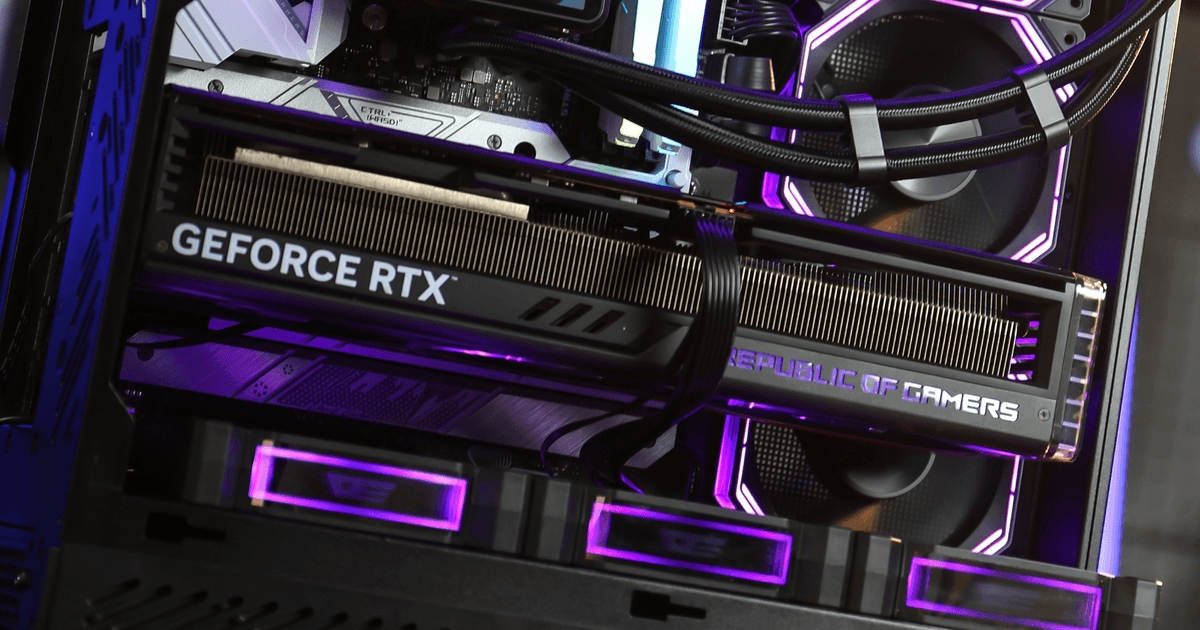

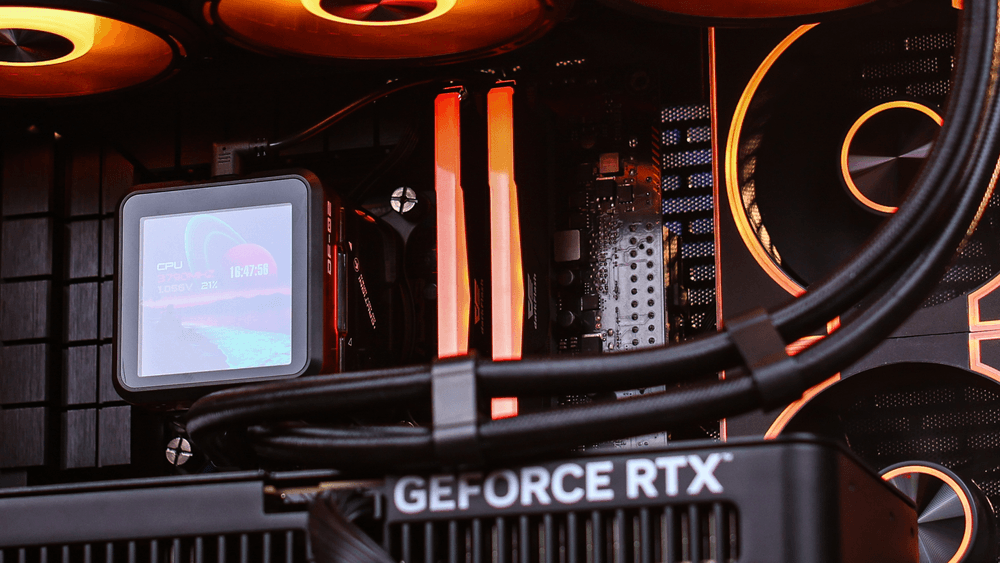

While TurboQuant saves you money on RAM capacity, the rapid "on-the-fly" decompression puts immense strain on your CPU and GPU silicon.

Instantaneous Thermal Spikes

Dynamic quantization requires constant, math-heavy unpacking and rescaling of compressed weights. This creates "burst heat" that can overwhelm traditional air coolers. A 360mm AIO provides the thermal headroom to absorb these heat spikes and avoid the thermal throttling that causes AI inference lag.
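The extra work behind on-the-fly decompression can be sketched as follows: before a compressed weight can be used, it must be unpacked from its byte (a shift and a mask) and rescaled (a multiply and an add), and this repeats for every weight on every pass. This assumes a generic layout of four 2-bit codes per byte with one scale and zero point per group; it is not TurboQuant's actual kernel, real implementations run fused on the GPU, and all names here are hypothetical.

```python
from typing import List


def unpack_2bit(packed: bytes, count: int) -> List[int]:
    """Extract `count` 2-bit codes stored four per byte, lowest bits first."""
    codes: List[int] = []
    for byte in packed:
        for slot in range(4):
            if len(codes) == count:
                return codes
            codes.append((byte >> (2 * slot)) & 0b11)  # shift, then mask
    return codes


def dequantize_on_the_fly(packed: bytes, count: int, scale: float, zero: float) -> List[float]:
    """Rebuild approximate weights right before use: one multiply and add per weight."""
    return [zero + c * scale for c in unpack_2bit(packed, count)]


def dot_with_packed(x: List[float], packed: bytes, scale: float, zero: float) -> float:
    """Dot product against 2-bit weights; dequantization sits inside the hot path."""
    w = dequantize_on_the_fly(packed, len(x), scale, zero)
    return sum(a * b for a, b in zip(x, w))


if __name__ == "__main__":
    packed = bytes([226])  # hypothetical: codes [2, 0, 2, 3] stored low bits first
    x = [1.0, 1.0, 1.0, 1.0]
    print(dot_with_packed(x, packed, scale=0.5, zero=-1.0))
```

Every multiply-accumulate in the model now carries this unpacking overhead, which is the sustained compute load that turns into heat.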

Power Ripple Management

The rapid switching of AI logic gates during TurboQuant execution causes large transient power spikes. An ATX 3.1 PSU (like the darkFlash PMT Series), built to handle the transient power excursions defined in the ATX 3.x specification, delivers the clean, low-ripple voltage needed to prevent system crashes during 24/7 AI workloads.

Conclusion: Software Salvation or Hardware Trap?

Google TurboQuant is the most significant software-driven "hardware hack" of 2026. While it may not instantly crash the price of RAM, it gives builders a way to fight back against the 2026 Memory Crisis. To leverage this tech, focus your budget on a stable cooling and power foundation from darkFlash, and let the AI models do the rest.